Hackers Are Using AI: The Threat Landscape for Businesses

In 2026, AI is a double-edged sword: it helps defenders detect attacks while fueling some of the most sophisticated cyber threats ever seen. Generative models like Google’s Gemini and Anthropic’s Claude are designed for productivity and automation, but cybercriminals have found ways to use them to scale attacks, personalize scams, and create adaptive malware that changes as it spreads.

Why AI Changed the Cybercrime Game

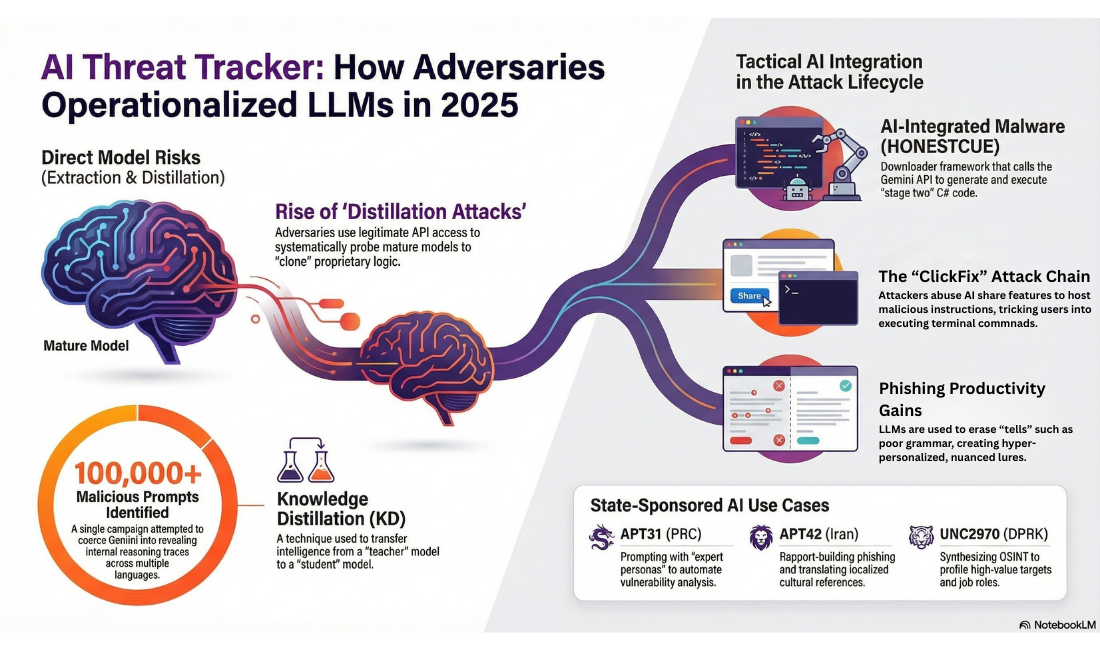

AI gives attackers something they’ve always wanted: the ability to scale with speed. Instead of manually crafting phishing emails or spending hours probing for vulnerabilities, modern threat actors now automate many of these steps using large language models (LLMs) and intelligent agents. According to recent threat intelligence reports, state-sponsored hacker groups are using AI to augment every phase of the attack lifecycle, from reconnaissance and phishing to malware development.

One of the most worrying aspects?

Many of these tactics no longer require advanced technical skills, which lowers the barrier to entry for cybercriminals. Cybercrime now looks less like the stereotypical hoodie-in-a-basement hacker and more like a “hack-by-subscription” business built on off-the-shelf kits.

Real AI-Driven Attack Case Studies

1. Deepfake CEO Scam with Malware Deployment

One of the most dramatic recent examples came from a campaign targeting the cryptocurrency sector. North Korean-linked hackers used AI-generated deepfake video to impersonate trusted executives during fake Zoom meetings. During these fraudulent calls, victims were convinced to install what they thought were “security updates” but were actually malware installers.

In this case, the malware suite included several components designed for persistence, credential theft, data exfiltration, and long-term compromise. The campaign shows how attackers can combine social engineering, AI-synthesized media, and malware deployment into a seamless attack chain.

2. Attackers Using Gemini to Scale Phishing and Malware Operations

Google’s Threat Intelligence Group (GTIG) reported that threat actors are using its Gemini AI model to craft tailored phishing campaigns, research specific targets’ weaknesses, and even generate code that is harder for traditional defenses to detect.

What makes this so dangerous is that attackers are no longer limited to generic templates. They can now include personalized data, mimic communication styles, and write fluently in multiple languages, so a phishing email can be almost indistinguishable from a legitimate internal message.

3. Phishing and Malware Kits with Adaptive Code

Emerging malware is being engineered with AI assistance so that it can rewrite parts of its own code, helping it evade detection by antivirus and endpoint security tools. Some strains even connect to cloud-based AI services during execution to generate new obfuscation each time they run.

The bottom line? Your legacy defenses might see “just another process” on a system while the malware constantly changes itself to slip past signature-based tools.
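To see why self-rewriting code is such a problem for legacy tools, here is a deliberately simplified Python sketch. It assumes a purely hash-based signature check (real antivirus engines also use heuristics and behavioral rules), the “payload” is a harmless placeholder string rather than real malware, and the names `KNOWN_BAD_HASHES` and `signature_match` are hypothetical.

```python
import hashlib

# Hypothetical "signature database": SHA-256 hashes of samples already known to be bad.
KNOWN_BAD_HASHES: set[str] = set()


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 fingerprint of a file's contents."""
    return hashlib.sha256(data).hexdigest()


def signature_match(sample: bytes) -> bool:
    """Classic signature check: flag a sample only if its exact hash is already known."""
    return sha256_hex(sample) in KNOWN_BAD_HASHES


# Harmless stand-in for a malicious payload (plain text, not real malware).
original = b"print('pretend payload')\n"
KNOWN_BAD_HASHES.add(sha256_hex(original))

# A "mutated" variant: same behavior, but with a junk comment appended,
# the kind of trivial rewrite self-modifying malware can perform on every run.
mutated = original + b"# junk 7f3a\n"

print(signature_match(original))  # True: the exact hash is in the database
print(signature_match(mutated))   # False: any changed byte yields a brand-new hash
```

Because even a one-byte change produces a completely different hash, a sample that rewrites itself on each run never matches a stored signature. That is why behavior-based detection matters against adaptive malware.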

4. AI-Powered “Phishing as a Service”

Dark web markets have begun offering phishing kits that use AI to generate context-aware message content and deepfake audio, all sold on a subscription basis. These tools produce highly convincing lures from publicly available data about employees or executives.

This “Phishing-as-a-Service” model works eerily like a popular streaming service, with basic, pro, and enterprise subscription tiers depending on how sophisticated the target is. It’s a chilling parallel to Netflix, but instead of watching Stranger Things, subscribers get Scam-Your-Employees streaming live.

Why Small Businesses Are Especially at Risk

Small and medium-sized businesses are becoming prime targets for two main reasons:

They often lack sophisticated defenses

Many SMBs use basic antivirus and firewall tools but lack advanced threat detection or full-time security teams. Attackers know this and have begun focusing on these weaker targets first.

The human element is the weakest link

AI-generated phishing and deepfakes make attacks far more believable. Even well-trained teams can be fooled by dynamic content that changes in real time. This makes security awareness training and incident response planning crucial, which is the focus of our guide on incident response plans you can implement today.

How Attackers Use AI: A Breakdown

Here are the main ways attackers harness AI today:

- Automated Reconnaissance – AI scans networks, identifies vulnerable systems, and prioritizes targets.

- Personalized Phishing – AI writes messages tailored to the victim’s social graph, job title, and language style.

- Deepfake Media – Voice and video fakes turn trust into a weapon.

- Adaptive Malware – Malware that continuously rewrites itself to avoid detection and maximize persistence.

- Dark Web Subscription Services – AI-powered malicious tools offered on demand through subscriptions.

Defense Against AI-Driven Threats

If attackers can use AI, defenders must do the same and then some. Here’s how organizations, especially small and medium-sized businesses, can up their game:

· Train and Test Your People – Implement automated phishing simulations. Humans are still key; AI attacks often rely on fooling people, not machines.

· Use Advanced Security Tools – Invest in AI-powered threat detection, endpoint protection, and anomaly monitoring. These tools can help detect behavior that doesn’t match normal patterns.

· Follow Cybersecurity Standards – If you haven’t already, adopting a standard like NIST SP 800-171 gives you a structured foundation for reducing risk. Learn more in our NIST 800-171 guide.
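Commercial AI-driven detection tools are far more sophisticated, but the core idea of flagging “behavior that doesn’t match normal patterns” can be illustrated with a simple statistical baseline. This Python sketch assumes you already collect a daily count per user (for example, failed logins); the function name `is_anomalous`, the sample numbers, and the three-standard-deviation threshold are hypothetical choices for illustration, not any vendor’s method.

```python
from statistics import mean, stdev


def is_anomalous(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's count if it sits more than `threshold` standard deviations
    above the historical mean (a simple z-score baseline)."""
    if len(history) < 2:
        return False  # not enough data to build a baseline yet
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today > mu  # perfectly flat history: any increase is unusual
    return (today - mu) / sigma > threshold


# Hypothetical week of failed-login counts for one account.
failed_logins = [2, 3, 1, 2, 4, 2, 3]
print(is_anomalous(failed_logins, 40))  # True: far outside the usual range
print(is_anomalous(failed_logins, 3))   # False: a normal day
```

A fixed threshold like this is easy to understand but noisy in practice; real tools learn baselines per user, per device, and per time of day, and combine many signals before raising an alert.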

Have a Response Plan Ready

Assume a breach will happen rather than relying on prevention alone. A good incident response plan helps minimize damage and restore operations faster.

The Future of AI in Cybercrime

Industry predictions are clear: AI will continue to fuel both offensive and defensive capabilities. Companies are also expected to start treating AI systems as potential insider threats, since compromised models or agents can act on behalf of attackers.

The arms race is in full swing. For every AI model used to generate an attack, there will be an AI model fighting to stop it.

Don’t Wait Until It’s Too Late

AI-driven attacks are no longer science fiction. They’re real, active, and evolving every day. Whether your business is a startup, a mid-sized company, or a growing enterprise, now is the time to act.

- Review your current cybersecurity posture

- Train employees on modern social engineering threats

- Partner with experts who understand both AI and security

Final Thoughts

If you’re unsure where to start, talk to a team that gets it. Whether you need robust threat detection or an incident response plan, 4BIS is here to help you secure your business in a world where attackers are increasingly using AI. Contact us to get started on strengthening your defenses today.